Artificial intelligence can become a powerful study partner, but only when students use it to strengthen thinking rather than replace it.

The question of whether students should use ChatGPT in their studies is no longer theoretical. Many already do. They use it to explain difficult concepts, summarize readings, draft outlines, translate passages, check grammar, prepare for exams and generate ideas for assignments. Some use it responsibly. Others use it to avoid doing the work. This difference matters because ChatGPT is not simply a shortcut or a threat. It is a tool, and like any powerful tool, its value depends on how it is used.

Education has always changed with technology. Calculators changed mathematics classrooms. Search engines changed research. Online videos changed how students review lessons outside school. ChatGPT and similar AI systems represent another shift: they can respond in natural language, adapt explanations, create examples and interact with students in a conversational way. This makes them more flexible than a textbook and more immediate than waiting for office hours or a tutoring session.

The strongest argument for allowing students to use ChatGPT is that it can support personalized learning. In a classroom, one teacher may need to guide dozens of students at different levels. Some understand a topic quickly, while others need more examples or a different explanation. ChatGPT can provide additional practice and alternative explanations at any hour. A student who is embarrassed to ask a basic question in class may feel more comfortable asking an AI tool to explain it step by step.

This can be especially useful in subjects that build gradually, such as mathematics, science, coding and foreign languages. A student can ask why a formula works, request a simpler example, compare two concepts or practice vocabulary in context. Instead of passively reading notes, the student can interact with the material. When used well, ChatGPT can help students move from confusion to curiosity.

It can also support writing, but this is where the risks become sharper. Used properly, ChatGPT can help a student brainstorm, organize an outline, identify unclear sentences or learn how to improve structure. It can act like a writing coach that gives feedback before the final draft. Used dishonestly, however, it can produce an essay that the student submits as original work. In that case, the tool does not support learning. It replaces it.

The difference between assistance and cheating must be made clear. Asking ChatGPT to explain the causes of climate change is different from asking it to write a full essay and submitting that essay under one’s own name. Asking for feedback on a paragraph is different from copying an AI-generated response into homework. Schools need clear rules, but students also need ethical judgment. Academic integrity is not only about avoiding punishment. It is about building the skills education is supposed to develop.

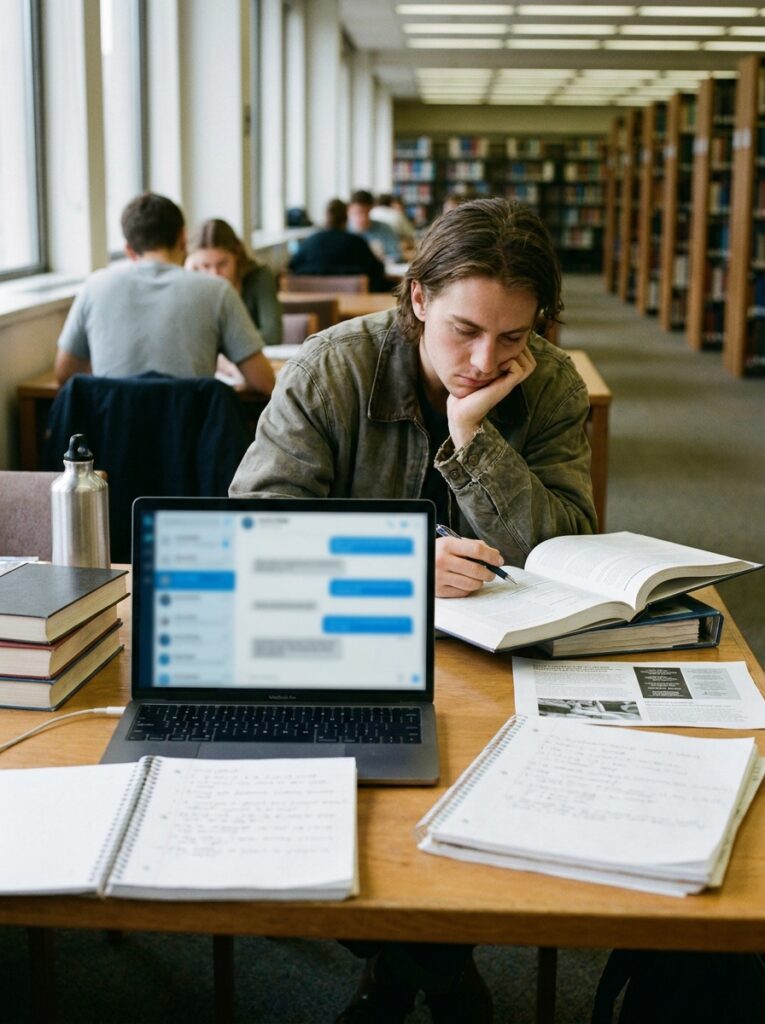

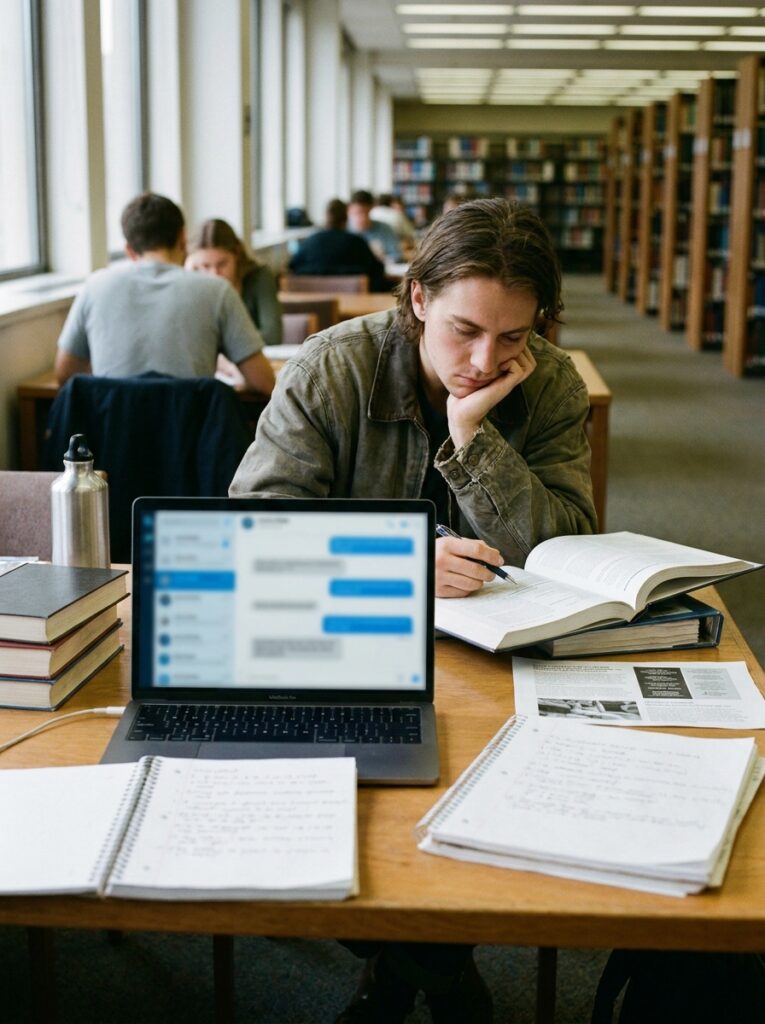

One major risk is dependency. If students turn to ChatGPT before trying to think, read or solve problems themselves, they may weaken their own learning process. Struggle is not a failure in education; it is part of how understanding forms. A student who immediately asks AI for the answer may receive a polished explanation but miss the mental effort that creates memory and skill. The danger is not that ChatGPT knows too much. The danger is that students may allow themselves to do too little.

Another risk is false confidence. ChatGPT can produce answers that sound fluent and convincing even when they are incomplete, outdated or wrong. Students may assume that a confident answer is an accurate answer. This is especially dangerous in research, history, science, law, medicine or any subject where precision matters. AI should not be treated as an unquestionable authority. It should be treated as a starting point that requires verification.

For this reason, students should learn to check AI outputs against reliable sources: textbooks, academic articles, official data, teacher instructions and credible news organizations. If ChatGPT provides a fact, date, quotation or citation, the student should verify it. If it gives a solution to a math problem, the student should work through the steps. If it summarizes a reading, the student should still read the original. AI can help organize learning, but it cannot replace the responsibility to know what is true.

Privacy is another concern. Students should avoid entering sensitive personal information, private school documents, confidential research data or details about other people. Young users may not fully understand how digital tools process the information they receive, or what risks can follow from pasting material into a chat.

There is also a fairness issue. Not all students have equal access to advanced tools, fast internet, paid subscriptions or teachers who understand AI. If schools allow AI without guidance, students with more resources may gain an advantage while others are left behind. If schools ban AI completely, students may still use it secretly, widening the gap between official rules and real behavior. A better approach is guided access, transparent expectations and AI literacy for both students and teachers.

Teachers remain essential. ChatGPT can explain, suggest and simulate conversation, but it does not know a student in the same way a good teacher can. It cannot fully understand classroom context, personal growth, emotional struggles or the goals of a specific course. The best use of AI does not remove teachers from education. It gives them another tool while preserving their role as mentors, evaluators and human guides.

Students should use ChatGPT in ways that make them more active, not more passive. A good method is to attempt the task first, then use AI for feedback. For example, a student can solve a problem and ask ChatGPT to identify mistakes. They can write a paragraph and ask how to make the argument clearer. They can ask for quiz questions on a topic, then answer them without help. They can ask the tool to challenge their reasoning rather than simply agree.

Students can also use ChatGPT to learn how to ask better questions. A vague request often produces a vague answer. A precise question forces the student to define what they do not understand. This is valuable in itself. Learning improves when students can identify the gap between what they know and what they need to know.

A responsible student might say: “Explain this concept at a high-school level,” “Give me three practice questions but do not show the answers yet,” “Point out weaknesses in my argument,” “Help me compare these two theories,” or “Ask me questions to test whether I understand this chapter.” These uses keep the student involved. The AI becomes a tutor, not a ghostwriter.

Schools should also update assessment. If homework can be completed entirely by AI, educators may need to design assignments that show process, reflection and personal reasoning. Oral defenses, in-class writing, project logs, drafts, peer discussion and applied problem-solving can help reveal what students actually understand. The goal should not be to catch students using AI, but to create learning environments where honest use is possible and dishonest use is less attractive.

Parents also have a role. They should not treat ChatGPT as either a miracle teacher or a dangerous machine. Instead, they can ask children how they use it, whether their school allows it and what parts of the work are their own. Families can help students see AI as a study support, not a substitute for effort.

So should students use ChatGPT? Yes, but not without rules, reflection and responsibility. Used well, it can explain difficult material, support language learning, improve writing, encourage practice and make education more accessible. Used poorly, it can encourage cheating, shallow thinking, overconfidence and dependence.

The future of education will not be built by pretending AI does not exist. Nor should it be built by surrendering learning to machines. The right question is not whether students should use ChatGPT, but how they can use it in a way that protects honesty and strengthens understanding.

A student who uses ChatGPT to avoid thinking may finish an assignment faster but learn less. A student who uses it to ask better questions, test ideas, correct mistakes and deepen understanding may become a stronger learner. The tool is powerful, but the purpose of education remains human: to develop knowledge, judgment, creativity and responsibility.